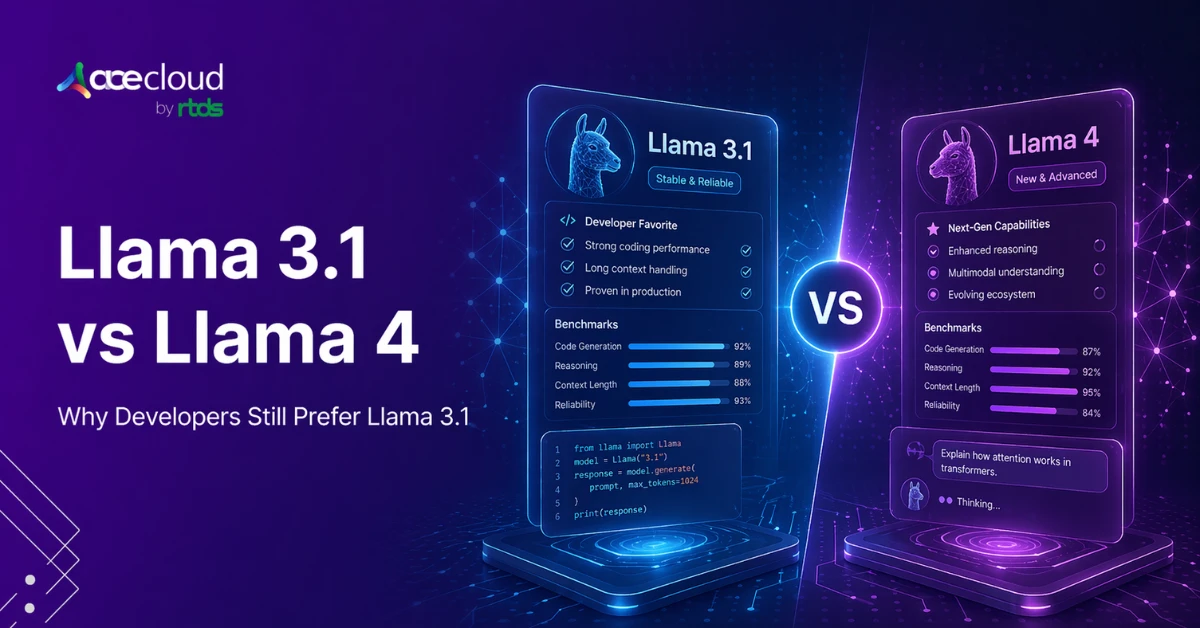

Llama 3.1 vs Llama 4 is no longer a simple version comparison. For engineers and startups, it has become a practical test of whether a newer model improves production workflows or simply looks stronger in launch materials and benchmark summaries. Meta introduced Llama 4 as a multimodal Mixture-of-Experts family and positioned it as a major step forward in capability and efficiency. Yet many developers still view Llama 3.1 as the more dependable choice for text-first coding work because it feels steadier in instruction following, code editing and repeatability.

That tension shows up in developer discussions. Some users report that the dense, text-first Llama 3.x models, including Llama 3.1 405B and Llama 3.3 70B, felt more reliable than Llama 4 Maverick in everyday coding loops. This is anecdotal workflow feedback, not an apples-to-apples benchmark conclusion about Llama 3.1 alone. The point is not that Llama 4 is universally worse. It is that benchmark wins do not always translate into lower friction, fewer bad edits or better production confidence.

In this blog, we examine why some teams still prefer Llama 3.1, where Llama 4 genuinely advances the model family, and how to evaluate both based on stability, cost and deployment fit.

Comparison Between Llama 3.1 and Llama 4

The table below highlights where Llama 3.1 feels stronger in trust, stability, and ecosystem maturity, and where Llama 4 clearly pushes the family forward in multimodality, context, and benchmark breadth.

| Dimension | Llama 3.1 (405B) | Llama 4 (Maverick/Scout) |
|---|---|---|
| Architecture | Dense decoder-only Transformer, easier to reason about and fine-tune | Mixture-of-Experts (MoE) family: Scout = 17B active / ~109B total / 16 experts; Maverick = 17B active / ~400B total / 128 experts; both are natively multimodal and operationally more complex than Llama 3.1’s dense text-only architecture |
| Benchmark trust | Cleaner public release with technical report and transparent documentation | Benchmark controversy after Meta used an experimental LM Arena variant, which raised trust concerns |
| Real-world coding | Often preferred for text-first coding workflows because of mature ecosystem and dense behavior | Strong official benchmark scores, but mixed developer feedback on instruction-following and coding friction |
| Fine-tuning ease | Mature LoRA and QLoRA ecosystem, especially for smaller variants like 8B and 70B | Growing support, but MoE and multimodal serving increase complexity |
| Context window | 128K context window | Official model-level claims: Scout up to 10M context, Maverick up to 1M for instruct variants; practical deployment limits and usable long-context quality vary by stack and platform, and some hosted deployments expose lower limits than the headline model claim |
| Community trust | Cleaner launch narrative and longer ecosystem maturity | Launch controversy and later Meta AI reorganization created caution among some buyers |
| Multimodal support | Text-only | Native text and image input |
| Cost efficiency | Simpler serving path for teams focused on text and code | Active-parameter efficiency can help, but infrastructure needs are higher |
| Transparency | Public technical report and detailed model cards | Model cards and release blog, but no comparable public technical report at launch |

Key Takeaways:

- Llama 3.1 is simpler, denser and easier to trust for many text-first coding workflows.

- Llama 4 adds real advances in multimodality, multilingual scale and benchmark breadth.

- For coding-heavy production use, stability and workflow fit may matter more than benchmark visibility.

- For multimodal or long-context workloads, Llama 4 may be the stronger strategic choice.

Why Did Llama 4’s Launch Raise More Questions Than Confidence?

Llama 4 did not enter the market with a simple ‘better model’ narrative. It arrived with a benchmark controversy that changed how many engineers interpreted its claims.

How did the benchmark controversy hurt trust?

A major issue surfaced when Meta’s LM Arena entry used an experimental ‘Llama-4-Maverick-03-26-Experimental’ variant optimized for conversationality rather than the vanilla public release. That weakened confidence that the leaderboard story matched the model most developers could actually deploy.

The result was an immediate trust gap. Engineers were no longer just comparing model quality. They were questioning whether the benchmark narrative reflected the actual release.

That kind of mismatch matters. Once developers feel benchmark positioning is overly optimized for perception, every marketing claim becomes harder to trust. For technical buyers, credibility is part of performance.

Why did Llama 3.1’s launch feel more credible?

Llama 3.1 launched in a much cleaner and more legible way. Meta’s public materials made the release easier to evaluate on its own terms: 8B, 70B and 405B variants, 128K context, text-only architecture, and clear documentation around training scale and multilingual support. That did not make Llama 3.1 universally better, but it did make the launch easier to trust.

For engineering teams, that counts. A model is not only judged by benchmark scores. It is judged by how believable the release feels when real deployment decisions are on the line.

What Makes Llama 3.1 Feel More Stable?

This is where the discussion moves from trust to engineering reality. Part of Llama 3.1’s appeal comes from the fact that it feels more familiar, more mature and easier to work with.

Why do dense models still feel safer?

Llama 3.1 is a dense, text-only autoregressive transformer family. Llama 4 shifts the family to MoE, with Scout at 17B active and about 109B total parameters and Maverick at 17B active and about 400B total. Dense models are not automatically better, but they still carry a practical advantage: teams understand their serving patterns, quantization behavior, and failure modes better.

That familiarity matters in production. A model that is easier to troubleshoot and adapt often feels more reliable than a newer model that is broader on paper but more complex operationally.
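The gap between active and total parameters is easy to put into numbers. The sketch below uses only the approximate parameter counts quoted in this article; the dictionary layout and the `active_fraction` helper are illustrative names, not part of any Meta tooling.

```python
# Parameter counts in billions, taken from the approximate figures quoted above.
MODELS = {
    "Llama 3.1 405B (dense)": {"active_b": 405, "total_b": 405},
    "Llama 4 Scout (MoE)": {"active_b": 17, "total_b": 109},
    "Llama 4 Maverick (MoE)": {"active_b": 17, "total_b": 400},
}


def active_fraction(active_b: float, total_b: float) -> float:
    """Share of the stored weights used to process a single token."""
    return active_b / total_b


for name, params in MODELS.items():
    frac = active_fraction(params["active_b"], params["total_b"])
    # A dense model uses every weight per token; an MoE model uses a subset,
    # but all of its weights still have to be loaded to serve it.
    print(f"{name}: {frac:.1%} of weights active per token")
```

The low active fraction is where the MoE compute savings come from, while the total count is what drives memory and serving requirements.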

How did MoE change the deployment equation?

Meta’s Llama 4 materials position MoE as a way to get large-model quality at a lower active-parameter inference cost, and that benefit is real for the right workloads. But the architecture also changes the deployment equation. Llama 4 brings multimodal inputs, bigger context ambitions, and heavier serving assumptions.

Meta’s llama-models repo says Llama 4 models require at least four GPUs for full BF16 inference. Separately, Meta and Hugging Face position Scout as able to fit on a single H100 only with quantization, while Maverick FP8 can fit on a single H100 DGX host. That makes Llama 4 materially heavier to serve than a simpler dense text-first deployment path. For teams that mainly want a reliable text-and-code workhorse, that can feel like a larger operational jump than they need.
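A rough back-of-the-envelope check makes these hardware statements easier to reason about. The sketch below is a simplification under stated assumptions: it counts weight memory only (no KV cache, activations, or framework overhead), assumes 80 GB per H100, assumes an 8-GPU host, and uses the approximate total parameter counts quoted above. The helper names are hypothetical.

```python
# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"bf16": 2.0, "fp8": 1.0, "int4": 0.5}
H100_GB = 80  # assumption: 80 GB H100 variant


def weight_gb(total_params_b: float, dtype: str) -> float:
    """Approximate weight memory in GB for a model with `total_params_b` billion parameters."""
    return total_params_b * BYTES_PER_PARAM[dtype]


def fits(total_params_b: float, dtype: str, num_gpus: int, gpu_gb: float = H100_GB) -> bool:
    """True if the weights alone fit in the combined memory of `num_gpus` GPUs."""
    return weight_gb(total_params_b, dtype) <= num_gpus * gpu_gb


checks = [
    ("Scout", 109, "bf16", 1),
    ("Scout", 109, "int4", 1),
    ("Scout", 109, "bf16", 4),
    ("Maverick", 400, "fp8", 8),  # assumption: an 8-GPU H100 host
]
for name, params_b, dtype, gpus in checks:
    gb = weight_gb(params_b, dtype)
    print(f"{name} {dtype}: ~{gb:.0f} GB weights, fits on {gpus} GPU(s): {fits(params_b, dtype, gpus)}")
```

Real deployments need headroom beyond the weights, so treat a marginal fit in this estimate as a warning sign rather than a green light.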

Where Does Llama 4 Disappoint Coding Workflows?

This is the part that keeps the debate alive. Officially, Llama 4 looks strong. But in real coding workflows, some developers say the experience feels worse than expected.

Why do some developers prefer smaller or older models?

The core complaint is not that Llama 4 knows less. It is that it can feel less dependable inside real coding loops. Third-party criticism after launch repeatedly described Maverick and Scout as underwhelming relative to expectation, especially for coding and long-context tasks.

Prompt Injection’s December 2025 critique called Scout’s long-context behavior poor and cited weak coding performance for Maverick in external testing. Even if not every outside benchmark should be treated as definitive, the broader pattern is consistent: some developers felt the public release did not live up to the launch narrative.

How do instruction misses affect coding quality?

Instruction misses matter because coding users do not judge models like benchmark authors do. They care about whether the model stays inside edit boundaries, follows constraints, and avoids turning a simple patch into a repair cycle. Meta’s own instruction-tuned table shows Maverick ahead of Llama 3.1 405B on LiveCodeBench pass@1 (43.4 versus 27.7), MMLU Pro (80.5 versus 73.4), and GPQA Diamond (69.8 versus 49.0).

But those official wins do not automatically settle the user experience question. A model can score better overall and still feel worse in day-to-day coding if it introduces more friction. That tension is the heart of the debate.
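One way to turn "stays inside edit boundaries" into something measurable is to diff the model's output against the original file and check which lines it touched. The following is a minimal sketch using Python's standard `difflib`; the function names and the idea of an allowed line range are assumptions made for illustration, not an established benchmark.

```python
import difflib


def changed_lines(original: str, edited: str) -> set[int]:
    """Return 1-based line numbers of `original` that the edit replaced, deleted, or inserted at."""
    a = original.splitlines()
    b = edited.splitlines()
    matcher = difflib.SequenceMatcher(a=a, b=b, autojunk=False)
    touched: set[int] = set()
    for tag, i1, i2, _j1, _j2 in matcher.get_opcodes():
        if tag == "equal":
            continue
        if i1 == i2:
            # Pure insertion: attribute it to the line it was inserted before,
            # or to the last line when appended at the end of the file.
            touched.add(min(i1 + 1, max(len(a), 1)))
        else:
            touched.update(range(i1 + 1, i2 + 1))
    return touched


def stays_in_bounds(original: str, edited: str, allowed_start: int, allowed_end: int) -> bool:
    """True if every touched line falls inside the allowed 1-based inclusive range."""
    return all(allowed_start <= n <= allowed_end for n in changed_lines(original, edited))


original = "a\nb\nc\nd"
print(stays_in_bounds(original, "a\nB\nc\nd", 2, 2))  # edit confined to line 2
print(stays_in_bounds(original, "A\nB\nc\nd", 2, 2))  # edit also rewrote line 1
```

Running a check like this over a batch of edit requests gives a per-model rate of out-of-bounds edits, which is closer to what developers complain about than a pass@1 score.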

Why Does Llama 3.1 Still Lead in Fine-Tuning and Ecosystem Maturity?

For many teams, the real winner is not the model with the boldest release. It is the model that is easier to adapt, cheaper to experiment with, and better supported by the ecosystem.

Why is Llama 3.1 easier to customize?

Llama 3.1 benefits from time, family breadth, and architectural familiarity. Since July 2024, the family has had more time to accumulate quantization recipes, LoRA and QLoRA workflows, deployment patterns, and fine-tuning guides, especially for the 8B and 70B variants. That makes the broader Llama 3.1 ecosystem easier to customize, even if the 405B model itself remains a heavyweight option.

Llama 3.1 emphasized practical adaptation, including 4-bit quantization and QLoRA for smaller variants, while Meta’s own materials framed the family as suitable for multilingual dialogue, coding, and tool use. For teams, that kind of ecosystem maturity is not a side benefit. It is part of the product.

What makes Llama 4 harder to serve today?

Llama 4 does have growing ecosystem support, but it still feels newer and heavier. Its MoE design, multimodal direction, and more demanding serving profile make it less straightforward for teams that just want a reliable text-and-code workhorse.

That does not make Llama 4 a bad choice. It just raises the bar. Teams need a stronger reason to adopt the extra complexity.

Is Llama 4’s Long-Context Story More Hype Than Utility?

Llama 4’s long-context positioning was one of its biggest launch highlights. But large context windows sound more impressive in marketing than they often feel in day-to-day production use.

Does a bigger context window mean better performance?

Not automatically. On paper, the jump is massive: Llama 3.1 is a 128K-context family, while Meta positions Llama 4 Scout at up to 10M context and Maverick instruct at up to 1M. But the long-context results shown in the public Llama 4 model cards are not at 1M or 10M. They are reported on 128K long-context evaluations, so the headline context claims should not be treated as proof of equal real-world quality at those extremes. Supported context and usable context are not the same thing, and external evaluations quickly challenged the practical value of those headline numbers.

Prompt Injection, a third-party critical analysis rather than a vendor benchmark source, reported Scout at 15.6% accuracy at 128K tokens and Maverick at 28.1% on the same long-context comprehension task, versus a reported 90.6% for Gemini 2.5 Pro. These numbers are best understood as external criticism, not official benchmark results, but they reinforce the broader point that a large context window only matters if the model can use it effectively.
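Teams can probe usable context themselves with a simple retrieval test: hide one fact at different depths of a long prompt and see whether the model can return it. The sketch below is a simplified needle-style probe, not the comprehension task cited above. `ask_model` is a hypothetical callable you would wire to your own endpoint, and `truncating_stub` is a fake model that only "sees" the first 2,000 characters, included so the example runs on its own.

```python
import re
from typing import Callable


def build_haystack(needle: str, filler: str, total_sentences: int, depth: float) -> str:
    """Place `needle` at relative `depth` (0.0 = start, 1.0 = end) among filler sentences."""
    position = round(depth * total_sentences)
    sentences = [filler] * total_sentences
    sentences.insert(position, needle)
    return " ".join(sentences)


def retrieval_accuracy(
    ask_model: Callable[[str], str],
    secret: str,
    total_sentences: int,
    depths: list[float],
) -> float:
    """Fraction of depths at which the model's answer contains the hidden secret."""
    needle = f"The access code is {secret}."
    hits = 0
    for depth in depths:
        context = build_haystack(needle, "The sky was grey that day.", total_sentences, depth)
        prompt = context + "\n\nWhat is the access code? Answer with the code only."
        if secret in ask_model(prompt):
            hits += 1
    return hits / len(depths)


def truncating_stub(prompt: str, visible_chars: int = 2000) -> str:
    """Hypothetical stand-in for a model that effectively ignores everything past `visible_chars`."""
    match = re.search(r"access code is (\w+)", prompt[:visible_chars])
    return match.group(1) if match else "unknown"


accuracy = retrieval_accuracy(truncating_stub, "7421", 200, [0.0, 0.5, 1.0])
print(f"retrieval accuracy across depths: {accuracy:.2f}")
```

Replacing the stub with a real client and scaling `total_sentences` toward your production prompt sizes shows where retrieval starts to degrade on your own stack, independent of the advertised window.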

Why can Llama 3.1’s smaller window be more practical?

Llama 3.1’s 128K window is much smaller, but for many production systems, it is already enough. More importantly, it is easier to trust and easier to integrate into workflows that are already built around it.

In practice, teams usually benefit more from a dependable context range than from a giant headline number they may never fully use.

Why Should Enterprise Buyers Watch Meta’s Internal Fallout?

This blog is not only about model quality. It is also about vendor confidence. Enterprise buyers do not just evaluate what a model can do today. They evaluate whether the organization behind it feels stable and dependable.

What did Meta’s restructuring signal to buyers?

Reuters reported in June 2025 that Meta reorganized its AI efforts under a new division called Meta Superintelligence Labs, led by Alexandr Wang after Meta’s $14.3 billion Scale AI deal. Reuters later reported that Meta cut around 600 roles in that AI unit.

Those moves do not prove Llama 4 is weak. For enterprise buyers, though, they create real uncertainty around continuity, priorities, and how confidently the roadmap can be trusted after a troubled launch cycle.

Why does roadmap confidence matter in enterprise AI?

Adopting a model is not a one-time choice. It often involves fine-tuning, security review, evaluation pipelines, and internal stakeholder buy-in. All of that depends on confidence in the vendor’s direction.

That is one reason Llama 3.1 still looks attractive. It feels like a known generation. Llama 4, to some buyers, still feels like a generation trying to recover its narrative.

Where Does Llama 4 Clearly Win?

- Native multimodality: Llama 4 handles both text and image input natively, making it far more useful for visual assistants, document analysis, and richer AI workflows.

- Stronger multilingual reach: Meta says Llama 4 was pre-trained on 200 languages, with 100+ languages getting over 1 billion tokens each, far beyond Llama 3.1’s 8-language support.

- Broader official benchmark strength: public Llama 4 model-card results show Maverick ahead of Llama 3.1 405B on benchmarks including MMLU, MMLU-Pro, MATH, MBPP, LiveCodeBench, and GPQA Diamond. These are official model-card results and are separate from the trust controversy around the experimental LM Arena variant.

- Better fit for advanced use cases: Llama 4 is broader and often stronger, especially for teams that need multimodality, scale, and wider capability, despite Llama 3.1’s simplicity and stability.


How Should Teams Evaluate Llama 3.1 vs Llama 4 on Their Own Stack?

This debate is best settled in your own environment, not on a public leaderboard. For teams, the practical test is whether the model improves real delivery outcomes.

What should technical teams actually measure?

Measure both models on your own repos, prompts, and workflows. Track instruction adherence, edit failure rate, debugging overhead, time to first token, throughput, and cost per resolved task. If your product does not truly need multimodality or very long context, those headline capabilities should not outweigh workflow quality.
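The metrics listed above can be rolled up from per-task records. Here is a minimal sketch, assuming you log one record per evaluation task; the `TaskResult` fields and the `summarize` helper are hypothetical names, and how you decide that a task "followed instructions" or was "resolved" is left to your own review process.

```python
from dataclasses import dataclass


@dataclass
class TaskResult:
    followed_instructions: bool  # did the output respect every stated constraint?
    edit_applied_cleanly: bool   # did the patch apply without manual repair?
    resolved: bool               # was the task accepted after review?
    ttft_s: float                # time to first token, in seconds
    output_tokens: int           # tokens generated for this task
    generation_s: float          # wall-clock generation time, in seconds
    cost_usd: float              # inference cost attributed to this task


def summarize(results: list[TaskResult]) -> dict[str, float]:
    """Aggregate per-task records into the workflow metrics discussed above."""
    n = len(results)
    resolved = sum(r.resolved for r in results)
    total_cost = sum(r.cost_usd for r in results)
    return {
        "instruction_adherence": sum(r.followed_instructions for r in results) / n,
        "edit_failure_rate": sum(not r.edit_applied_cleanly for r in results) / n,
        "mean_ttft_s": sum(r.ttft_s for r in results) / n,
        "tokens_per_s": sum(r.output_tokens for r in results) / sum(r.generation_s for r in results),
        # Cost is divided by resolved tasks only, so failed attempts make wins more expensive.
        "cost_per_resolved_task": total_cost / resolved if resolved else float("inf"),
    }


sample = [
    TaskResult(True, True, True, 0.5, 100, 2.0, 0.25),
    TaskResult(True, False, False, 0.5, 100, 2.0, 0.25),
    TaskResult(False, True, True, 1.0, 200, 4.0, 0.5),
    TaskResult(True, True, False, 1.0, 200, 4.0, 0.5),
]
for metric, value in summarize(sample).items():
    print(f"{metric}: {value:.2f}")
```

Running the same task set through both models and comparing the two summaries side by side keeps the decision anchored to workflow outcomes rather than headline capabilities.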

Why does this matter more than benchmarks alone?

Benchmarks show broad capability. Production testing shows fit. A model that scores higher overall can still be the worse choice if it creates more rework, higher infrastructure complexity, or lower confidence in repeated coding tasks. That is why the best model for your team is the one that performs best on your stack, not the one with the loudest launch.

Which Model Should Teams Actually Choose?

- Choose Llama 3.1 when the job is mostly text and code, when ecosystem maturity matters, and when your team values stable behavior over ambitious feature expansion. It is often the safer fit for coding assistants, internal tools and cost-sensitive production environments.

- Choose Llama 4 when you need multimodality, broader official benchmark strength, and materially larger context ambitions than Llama 3.1. For the right workloads, those advantages are real and strategically important. But validate the actual context limit, VRAM footprint, quantization path, and serving stack on your chosen platform, because hosted deployments may expose lower context windows than the headline model-level claim.

Ready to Choose the Right Llama Stack for Production?

Whether you choose Llama 3.1 for stable, text-first coding workflows or Llama 4 for multimodal, long-context workloads, the smartest decision comes from testing both against your real infrastructure, prompts and delivery goals.

The right model is not the one with the loudest benchmarks. It is the one that improves reliability, speed, and cost efficiency for your team. If you are ready to evaluate, deploy or scale Llama workloads with confidence, AceCloud can help you benchmark faster, optimize GPU performance and move from experimentation to production with clarity.

Explore AceCloud to build your AI stack with confidence, control and clarity at every stage today.

Frequently Asked Questions

Some teams value stability and predictable instruction following because it reduces rework in iterative coding edits. When a model stays aligned with constraints, you can review and merge faster, which improves end-to-end engineering throughput.

Not necessarily, since Llama 4 targets multimodality, MoE efficiency and very large context, which can be valuable. The ‘worse’ perception usually reflects a mismatch between model strengths and a specific coding workflow.

Speed depends on model size, serving stack and whether MoE routing improves your throughput for your prompts. You should measure time to first token and time per resolved task, since raw tokens per second can mislead.

The best production model balances quality, infrastructure cost and operational simplicity for your workload and constraints. You can make that decision defensible by testing against your own repos and tracking failure rates over time.

Upgrade when Llama 4’s strengths map to clear requirements like multimodal inputs or larger context needs. Otherwise, Llama 3.1 can remain the more practical option if it already meets quality, cost and stability targets.