Cloud GPUs have become the backbone of modern high-performance computing, especially for training and deploying machine learning models. The combination of GPU acceleration and cloud infrastructure delivers the scale, flexibility and performance that AI and ML workloads demand.

Today, high-performance computing (HPC) combined with GPU acceleration is powering breakthroughs across industries ranging from healthcare to finance. Instead of buying expensive hardware, businesses can rent powerful GPUs on demand, which makes HPC accessible to far more organizations.

According to a recent survey, the global high-performance computing market was estimated at USD 50.02 billion in 2023 and is expected to grow from USD 54.39 billion in 2024 to USD 109.99 billion by 2032, a CAGR of 9.2%.
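As a quick sanity check, the figures above can be tested against the standard compound-growth formula. The sketch below assumes the 9.2% rate compounds annually over the eight years from 2024 to 2032; the function name is illustrative and not taken from the survey.

```python
def project_value(start_value: float, cagr: float, years: int) -> float:
    """Compound start_value at a fixed annual growth rate for the given number of years."""
    return start_value * (1 + cagr) ** years


# Figures quoted above: USD 54.39 billion in 2024, 9.2% CAGR, horizon 2032.
projected_2032 = project_value(54.39, 0.092, 2032 - 2024)
print(f"Projected 2032 market size: USD {projected_2032:.2f} billion")
```

Under that assumption the projection lands within rounding distance of the quoted USD 109.99 billion, so the three numbers are consistent with one another.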

In this blog, we will explain how cloud GPUs accelerate AI/ML, why they matter for HPC workloads and how they redefine the future of computing.

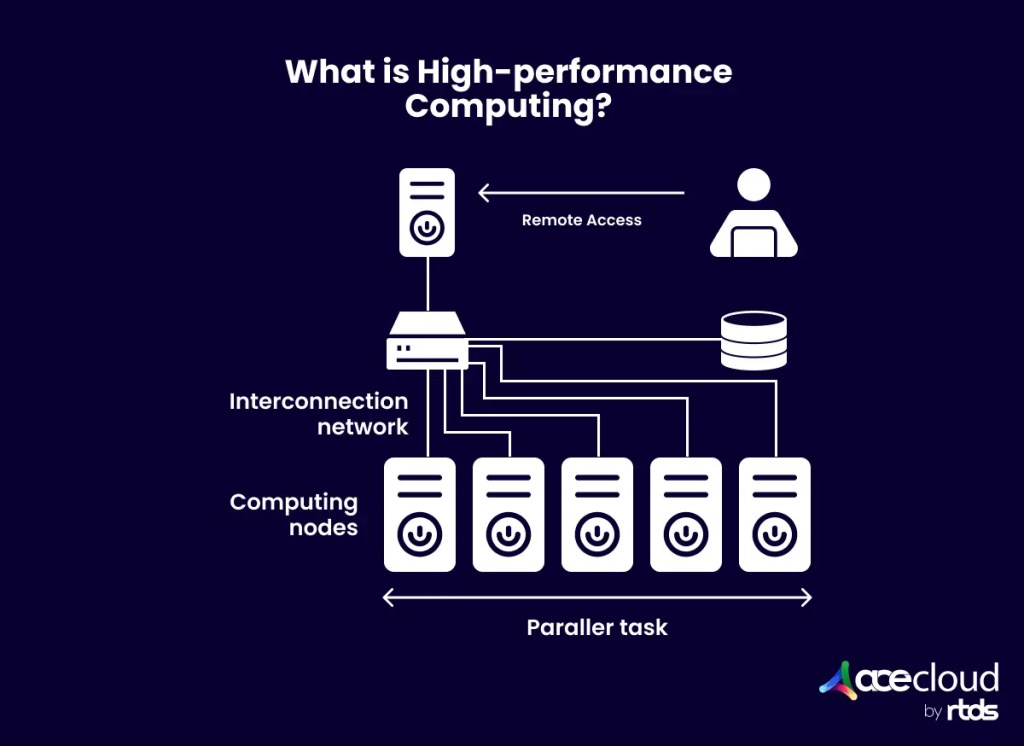

What Is High-Performance Computing with Cloud GPUs?

High-performance computing (HPC) is the practice of solving complex problems by applying large amounts of parallel processing power. Traditionally, HPC depended on on-premise clusters built from CPUs and specialized infrastructure. However, current workloads demand higher throughput and shorter training times. This is where GPUs outperform CPUs, as they can run thousands of threads in parallel.

Cloud GPUs for AI combine GPU parallelism with the elasticity of cloud computing. Instead of purchasing physical servers, organizations can rent GPU-powered nodes on demand. This cloud-based HPC infrastructure lets developers, data scientists and organizations train large-scale AI models efficiently, and it removes much of the complexity of hardware procurement, power management and maintenance.

Why Do Cloud GPUs Matter for AI and ML?

Let’s explore why Cloud GPUs have become indispensable for modern AI initiatives.

Acceleration Beyond CPUs

Cloud GPUs deliver parallel computing power that CPUs cannot match, which can cut model training time from weeks to hours for workloads that parallelize well. This speed boosts productivity, enabling faster experiments, quicker iteration and rapid deployment. For businesses in competitive fields like healthcare, finance and autonomous systems, cloud GPUs offer immediate access to high performance without heavy infrastructure costs, turning faster innovation into a decisive advantage.

Scalability and Cost Efficiency

Cloud GPUs also provide flexibility unmatched by traditional infrastructure. Teams can scale GPU resources up during training spikes and scale them down afterward. This on-demand model ensures organizations pay only for what they use, avoiding wasted capacity. Access to the latest GPU models through cloud providers eliminates the need for frequent hardware upgrades.

Collaboration Across Teams

Another key advantage is collaboration. Cloud GPU environments allow global teams to share datasets and work on models seamlessly. Data scientists, ML engineers and developers across locations can access the same resources in real time. This shared accessibility enables continuous development without infrastructure bottlenecks.

Driving Innovation Across Industries

Cloud GPUs are not just technical accelerators; they are enablers of industry-wide transformation. From precision medicine to fraud detection and autonomous vehicles, organizations rely on GPU-powered AI to solve challenges once considered impossible. With cloud computing, businesses across all sectors can innovate faster, reach markets sooner and deliver solutions that reshape entire industries.

Access to the Latest Accelerators

Organizations need the ability to tap into the newest GPUs, such as the NVIDIA H100, without lengthy procurement cycles. Renting offers instant access to cutting-edge accelerators, enabling teams to experiment, train models and deploy solutions quickly. This flexibility removes hardware bottlenecks, reduces time-to-market and keeps AI teams on current-generation infrastructure.

Security & Compliance at Speed

Modern AI workloads require strong data protection and regulatory adherence without slowing innovation. Cloud GPU platforms provide encryption, network isolation and compliance-ready environments from day one. Teams can run sensitive workloads securely while meeting local regulations such as data residency requirements. This approach combines speed and security, allowing businesses to innovate without risking governance gaps.

Sustainability

Running AI infrastructure efficiently is key to reducing environmental impact and energy costs. Renting GPUs lets businesses consume compute only when needed, minimizing idle resources. Providers invest in optimized data centers, efficient cooling and renewable energy initiatives, allowing customers to align AI operations with sustainability goals. This approach supports green growth while meeting performance requirements for modern workloads.

How to Choose the Best GPU Cloud Provider?

Selecting a GPU cloud provider is a pivotal step once you decide to embrace HPC with cloud GPUs. The right partner can unlock performance, scalability and cost savings while the wrong one can cause delays and overruns.

To make an informed decision, you need to balance performance, cost and risk using a structured checklist.

Step 1: Research the Vendor

The process begins with vendor research because proof speaks louder than promises. By reviewing case studies, customer testimonials and third-party reliability reports, you can separate genuine providers from those relying only on marketing claims. Platforms like G2 help validate these insights further and give you confidence in the provider’s credibility.

Step 2: Assess Infrastructure and Performance

Once you narrow down vendors, the next step is to evaluate infrastructure. This means confirming whether the provider’s data centers meet Tier III or Tier IV standards and whether they offer the GPU models your workloads require. Details like VRAM size, NVLink interconnects, InfiniBand speeds and QoS policies directly affect performance. Requesting benchmarks ensures you know how the environment performs under real-world loads before committing.

Step 3: Locate for Latency and Data Gravity

Performance also depends on geography. Choosing regions close to your users and data minimizes latency and improves responsiveness. At the same time, checking the provider’s backbone, peering and private connectivity ensures smooth data flow between your cloud workloads and existing environments.

Step 4: Cost Transparency

Infrastructure and performance matter, but cost visibility matters just as much. Comparing on-demand, reserved and spot pricing helps identify the most suitable model. However, do not stop at hourly rates. Factor in egress fees, storage charges and support plan costs. Billing granularity and idle charges can also make a big difference over time.
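To illustrate why the hourly rate alone can mislead, here is a minimal sketch that totals a month of charges across compute, egress, storage and support. Every price and usage figure is a hypothetical placeholder rather than a quote from any provider, and the class and function names are invented for this example.

```python
from dataclasses import dataclass


@dataclass
class MonthlyUsage:
    gpu_hours: float    # billed GPU hours, including any idle time
    egress_gb: float    # data transferred out of the cloud
    storage_gb: float   # persistent storage held for the month


@dataclass
class PriceSheet:
    gpu_hourly_usd: float
    egress_per_gb_usd: float
    storage_per_gb_month_usd: float
    support_flat_usd: float


def monthly_cost(usage: MonthlyUsage, prices: PriceSheet) -> dict:
    """Return a per-category cost breakdown plus the overall total."""
    breakdown = {
        "compute": usage.gpu_hours * prices.gpu_hourly_usd,
        "egress": usage.egress_gb * prices.egress_per_gb_usd,
        "storage": usage.storage_gb * prices.storage_per_gb_month_usd,
        "support": prices.support_flat_usd,
    }
    breakdown["total"] = sum(breakdown.values())
    return breakdown


# Hypothetical workload and two hypothetical price sheets.
usage = MonthlyUsage(gpu_hours=400, egress_gb=5000, storage_gb=2000)
provider_a = PriceSheet(2.50, 0.09, 0.10, 100.0)  # cheaper per hour
provider_b = PriceSheet(2.80, 0.00, 0.05, 0.0)    # pricier per hour, no egress fee

cost_a = monthly_cost(usage, provider_a)
cost_b = monthly_cost(usage, provider_b)
print(f"Provider A total: ${cost_a['total']:.2f}")
print(f"Provider B total: ${cost_b['total']:.2f}")
```

With these made-up numbers, the provider with the lower hourly rate ends up more expensive once egress, storage and support are included, which is the effect the checklist above is meant to catch.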

Step 5: Evaluate Support Depth

Even with the right infrastructure and pricing, your experience depends heavily on support. Look for 24×7 availability with SLAs, direct escalation paths and expertise in GPUs and Kubernetes. Onboarding help, migration assistance and the option of a dedicated technical account manager can ease adoption and reduce friction.

Step 6: Verify Security and Compliance

Security is non-negotiable, and your provider must demonstrate multilayer controls across network, endpoint and admin levels. Certifications like ISO 27001 and SOC 2 Type II provide assurance, while features such as customer-managed encryption keys (CMEK), audit logs and VPC isolation give you control over sensitive data. Data residency and deletion guarantees should also align with your compliance requirements.

Step 7: Plan for Capacity and Scale

Popular GPU models often face supply shortages, so check the provider’s ability to guarantee capacity. Reservation options, burst pools and multi-region availability signal readiness to support your growth. Equally important is their roadmap, ensuring they can deliver the next generation of GPUs when you need them.

How to Optimize Costs for Cloud GPU for AI/ML

Optimizing costs for cloud GPU servers requires choosing the right strategy for each workload type. Below is a quick comparison to help you decide.

| Strategy | Best For | Advantages | Considerations |
|---|---|---|---|
| Spot Instances | Fault-tolerant tasks (batch inference, distributed training, model experimentation) | Lowest cost option with savings up to 70–80% compared to on-demand pricing | Risk of termination without notice, requires checkpointing or restart mechanisms |
| Hibernation | Long-running or iterative jobs (training sessions, debugging, workflows spanning multiple days) | Avoids paying for idle GPUs, resumes workloads without a full restart | May incur storage costs for saving VM state, not available on all providers |
| Reserved Capacity | Predictable, ongoing workloads (continuous retraining, production inference, scheduled tasks) | Guaranteed GPU availability at lower hourly rates, ideal for enterprise stability | Requires upfront commitment, less flexibility if workloads change unexpectedly |
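The checkpointing requirement for spot instances can be sketched as a resumable loop. This is a minimal illustration using only the Python standard library: the "training step" is a placeholder sum, the `stop_at` parameter simulates a spot termination, and the file layout is an assumption rather than any framework's checkpoint format.

```python
import json
import os
import tempfile
from typing import Optional


def save_checkpoint(path: str, state: dict) -> None:
    """Write to a temp file, then rename, so a mid-write kill cannot corrupt the checkpoint."""
    directory = os.path.dirname(os.path.abspath(path))
    fd, tmp_path = tempfile.mkstemp(dir=directory)
    with os.fdopen(fd, "w") as handle:
        json.dump(state, handle)
    os.replace(tmp_path, path)


def load_checkpoint(path: str) -> dict:
    """Return the saved state, or a fresh state if no checkpoint exists yet."""
    if os.path.exists(path):
        with open(path) as handle:
            return json.load(handle)
    return {"step": 0, "running_total": 0.0}


def train(path: str, total_steps: int, checkpoint_every: int,
          stop_at: Optional[int] = None) -> dict:
    """Run from the last checkpoint; stop_at simulates the instance being reclaimed."""
    state = load_checkpoint(path)
    for step in range(state["step"], total_steps):
        if stop_at is not None and step == stop_at:
            return state  # work since the last checkpoint is lost, as on a real termination
        state["running_total"] += step  # placeholder for one real training step
        state["step"] = step + 1
        if state["step"] % checkpoint_every == 0:
            save_checkpoint(path, state)
    save_checkpoint(path, state)
    return state


with tempfile.TemporaryDirectory() as workdir:
    ckpt = os.path.join(workdir, "checkpoint.json")
    train(ckpt, total_steps=10, checkpoint_every=3, stop_at=7)     # interrupted run
    final_state = train(ckpt, total_steps=10, checkpoint_every=3)  # resumed run

print(final_state)
```

The resumed run repeats only the step completed after the last checkpoint, so the checkpoint interval sets how much work a termination can cost. Shorter intervals lose less work but spend more time writing state.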

GPU-based HPC Use Cases

GPU-based HPC enables industries to solve complex challenges faster by harnessing massive parallel computing power. These use cases highlight how GPUs accelerate innovation across AI, life sciences, finance and engineering with unmatched performance and efficiency.

Deep Learning Training

GPUs deliver the parallel computing power required to train deep learning models efficiently. They can reduce training time from weeks to hours, enabling researchers and engineers to iterate quickly, improve accuracy and deploy AI systems faster in production environments.

Natural Language Processing

From large language models to speech recognition systems, GPUs accelerate training and inference across NLP workloads. They parallelize the matrix operations behind models with billions of parameters, powering translation, summarization and conversational AI applications with high accuracy and low latency for real-time interactions.

Computer Vision and Imaging

GPUs support image classification, video analytics and object detection at massive scales. Industries use them for autonomous vehicles, industrial automation and healthcare imaging, where fast, accurate recognition of visual data directly impacts safety, efficiency and quality outcomes.

Genomics and Drug Discovery

In life sciences, GPUs accelerate sequence analysis, protein folding simulations and drug candidate screening. Researchers run highly complex models faster, reducing time to insights. This acceleration directly supports breakthroughs in precision medicine, pandemic response and pharmaceutical innovation worldwide.

Financial Modeling

Financial institutions rely on GPUs for real-time risk modeling, fraud detection and portfolio optimization. Monte Carlo simulations and complex pricing strategies run at scale, allowing banks and fintech firms to make faster, data-driven decisions with reduced operational and compliance risks.
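As a rough illustration of why Monte Carlo pricing suits parallel hardware, the sketch below prices a European call option by averaging many independent simulated paths and compares the estimate with the Black-Scholes closed form. It runs on the CPU with the standard library only, and the option parameters are arbitrary examples. Because no path depends on any other, the same loop could be spread across thousands of GPU threads.

```python
import math
import random


def black_scholes_call(spot, strike, rate, volatility, maturity):
    """Closed-form European call price, used here only as a reference value."""
    d1 = (math.log(spot / strike) + (rate + 0.5 * volatility ** 2) * maturity) / (
        volatility * math.sqrt(maturity)
    )
    d2 = d1 - volatility * math.sqrt(maturity)

    def normal_cdf(x):
        return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))

    return spot * normal_cdf(d1) - strike * math.exp(-rate * maturity) * normal_cdf(d2)


def monte_carlo_call(spot, strike, rate, volatility, maturity, paths, seed=0):
    """Estimate the call price by averaging discounted payoffs over simulated paths."""
    rng = random.Random(seed)
    drift = (rate - 0.5 * volatility ** 2) * maturity
    diffusion = volatility * math.sqrt(maturity)
    payoff_sum = 0.0
    for _ in range(paths):
        # Each path is independent of the others, which is what makes this parallelizable.
        terminal = spot * math.exp(drift + diffusion * rng.gauss(0.0, 1.0))
        payoff_sum += max(terminal - strike, 0.0)
    return math.exp(-rate * maturity) * payoff_sum / paths


# Arbitrary example parameters, not market data.
params = dict(spot=100.0, strike=100.0, rate=0.05, volatility=0.2, maturity=1.0)
estimate = monte_carlo_call(paths=100_000, **params)
reference = black_scholes_call(**params)
print(f"Monte Carlo: {estimate:.3f}  closed form: {reference:.3f}")
```

The estimate's error shrinks roughly with the square root of the number of paths, so tighter accuracy needs many more simulations. That is the point at which GPU parallelism starts to pay off.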

Power Your AI Journey with AceCloud

Cloud GPUs for AI are no longer a luxury but a necessity for organizations aiming to scale their AI and ML workloads effectively. By combining HPC infrastructure with GPU-accelerated machine learning, teams gain the speed, flexibility and collaboration needed to innovate faster. With the right cloud partner, you can simplify deployment, control costs and focus on building impactful solutions instead of managing infrastructure.

AceCloud empowers AI engineers, research labs and AI/ML startups with powerful cloud compute designed to meet real-world demands. Whether you need GPUs for training, inference or large-scale simulations, we deliver unmatched performance with reliability and security.

Get started with AceCloud today to experience how purpose-built GPU cloud solutions transform AI initiatives from idea to production.

Frequently Asked Questions:

Cloud GPU for AI delivers on-demand accelerators for training and inference without buying hardware. It combines GPU performance for machine learning with elastic cloud compute, so teams scale quickly, shorten experiments, and control spend. For HPC and AI infrastructure, it eases capacity limits and shortens the time to usable models.

Choose Cloud GPU for AI when workloads are bursty, timelines are tight, or hardware is scarce. You start fast, scale up or down, and pay only for use. Pick on-prem only when utilization is near 24×7, data must stay in a specific facility, or custom networking is mandatory.
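One way to make the utilization argument concrete is a break-even calculation. The sketch below compares an amortized on-prem GPU with renting the same capacity by the hour. Every figure in it (purchase price, amortization period, operating cost, hourly rate) is a hypothetical placeholder, and the function name is invented for this example.

```python
HOURS_PER_MONTH = 730  # average number of hours in a month


def break_even_utilization(purchase_usd: float, amortization_months: int,
                           monthly_ops_usd: float, cloud_hourly_usd: float) -> float:
    """Fraction of the month a GPU must be busy before owning beats renting."""
    on_prem_monthly = purchase_usd / amortization_months + monthly_ops_usd
    cloud_full_month = cloud_hourly_usd * HOURS_PER_MONTH
    return on_prem_monthly / cloud_full_month


# Hypothetical inputs: a USD 30,000 GPU server amortized over 36 months,
# USD 300 per month for power and hosting, versus USD 2.50 per GPU hour in the cloud.
threshold = break_even_utilization(30_000, 36, 300, 2.50)
print(f"On-prem is cheaper only above {threshold:.0%} utilization")
```

With these placeholder numbers the threshold comes out a little above 60% utilization. Below it, paying only for hours used is cheaper. Above it, owning starts to win on cost alone, before data-location or networking constraints are considered.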

Cloud GPU for AI excels at large language models, vision, recommendation, and speech. It also speeds up data science pipelines, simulations, and analytics in HPC environments. If the job needs massive parallelism or short training cycles, cloud GPUs usually deliver the fastest turnaround.

Start with model size, sequence length, and target throughput. Map VRAM needs to batch size, then check tokens per second per GPU for your framework. Add headroom for data loading and networking. Test a small cluster first, then scale out while confirming that throughput grows close to linearly.
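A first-pass VRAM estimate can be sketched from parameter count alone. The bytes-per-parameter figures below are common rules of thumb, not measurements: about 2 bytes per parameter for FP16 inference weights, and about 16 bytes per parameter for mixed-precision training with Adam (weights, gradients, and optimizer state). Activations and KV cache are deliberately excluded, so treat the result as a lower bound. The function name and the 20% headroom default are assumptions.

```python
import math

BYTES_PER_PARAM_FP16_INFERENCE = 2        # FP16 weights only
BYTES_PER_PARAM_MIXED_ADAM_TRAINING = 16  # FP16 weights and grads plus FP32 optimizer state


def estimate_gpus(param_count: float, bytes_per_param: int,
                  gpu_vram_gb: float, headroom: float = 0.2):
    """Return (required memory in GB, minimum GPU count) with a fraction of VRAM kept free."""
    required_gb = param_count * bytes_per_param / 1e9
    usable_gb = gpu_vram_gb * (1 - headroom)
    return required_gb, math.ceil(required_gb / usable_gb)


# Example: a 7-billion-parameter model on 80 GB GPUs.
inference_gb, inference_gpus = estimate_gpus(7e9, BYTES_PER_PARAM_FP16_INFERENCE, 80)
training_gb, training_gpus = estimate_gpus(7e9, BYTES_PER_PARAM_MIXED_ADAM_TRAINING, 80)
print(f"Inference: {inference_gb:.0f} GB -> {inference_gpus} GPU(s)")
print(f"Training:  {training_gb:.0f} GB -> {training_gpus} GPU(s)")
```

Batch size and sequence length then determine how much extra memory activations need on top of this baseline, which is why testing on a small cluster remains the deciding step.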

Use mixed precision, efficient kernels, and better batch scheduling. Right-size instances, place data close to compute, and cache features. Combine spot, reserved, and on-demand capacity. Track utilization and tokens per dollar so your cloud GPU spend stays fast and financially efficient.
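Tokens per dollar can be tracked with a single formula: throughput multiplied by the seconds in an hour, scaled by utilization, and divided by the hourly price. The sketch below uses hypothetical throughput, price, and utilization figures to compare two purchasing options. None of the numbers come from a real price list.

```python
def tokens_per_dollar(tokens_per_second: float, hourly_price_usd: float,
                      utilization: float = 1.0) -> float:
    """Tokens processed per dollar spent, given the fraction of billed time doing useful work."""
    if hourly_price_usd <= 0:
        raise ValueError("hourly_price_usd must be positive")
    return tokens_per_second * utilization * 3600 / hourly_price_usd


# Hypothetical comparison: the same GPU bought on-demand versus on spot,
# with spot losing more useful time to interruptions and restarts.
on_demand = tokens_per_dollar(2500, hourly_price_usd=3.00, utilization=0.90)
spot = tokens_per_dollar(2500, hourly_price_usd=1.00, utilization=0.75)
print(f"On-demand: {on_demand:,.0f} tokens per dollar")
print(f"Spot:      {spot:,.0f} tokens per dollar")
```

Including utilization in the metric matters because a cheaper instance that sits idle or restarts often can cost more per token than its hourly rate suggests.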