Quick Summary: As of January 2026, the NVIDIA H200 price in India ranges from about ₹28 lakh to nearly ₹1 crore (₹99 lakh) per unit, depending on configuration and vendor. If you prefer to rent, AceCloud offers H200 capacity at ₹378 per hour.

Are you looking for a GPU to power high-performance computing workloads?

The NVIDIA H200 is one of the most powerful GPUs available, with HBM3e memory designed to handle foundation model training, inference and real-time GenAI workloads.

However, when it comes to the NVIDIA H200 price in India, you’re likely facing a critical decision: rent or buy. The answer depends on your workload size, compliance requirements, business model and budget.

For most startups, AI labs and deep learning teams, H200 GPU rental is the smart move. It offers power without the capital lock-in.

But if you’re scaling aggressively or building your own AI infrastructure, buying may provide better long-term ROI.

In this blog, we will break it all down: H200 specs, pricing models, rental options and buying scenarios in India.

Key Specifications of the H200 GPU

The NVIDIA H200 GPU is designed to meet the escalating demands of modern AI and high-performance computing (HPC).

As the latest evolution of the Hopper architecture, the H200 takes a major leap beyond its predecessor by introducing faster memory and substantially higher data throughput.

It’s tailored for teams working on billion-parameter AI models, real-time data analytics or scientific simulations.

Whether you’re working on foundation model training, multi-modal AI or high-throughput scientific simulations, the H200 accelerates performance across the board.

Its ability to handle long sequences and large batches makes it the go-to GPU for LLMs, RAG systems and vertical-specific AI deployments.

Memory

141 GB of ultra-fast HBM3e memory, up from 80 GB of HBM3 on the H100, to accommodate longer context windows and larger model checkpoints.

Bandwidth

Blazing-fast 4.8 TB/s memory bandwidth, minimizing layer-to-layer bottlenecks during both training and inference.

Precision

Support for FP8, FP16 and BF16 precision formats, powered by NVIDIA’s Transformer Engine, perfect for balancing speed and accuracy.

Architecture

Built on the NVIDIA Hopper platform, optimized for transformer-heavy workloads and low-latency model inference.

This combination of memory, bandwidth and precision is designed to cut inference time, lower compute costs and accelerate AI development cycles.
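To make the interplay of memory, bandwidth and precision concrete, here is a minimal back-of-the-envelope sketch. It assumes a weights-only footprint (no KV cache, activations or framework overhead) and treats single-stream decoding as purely memory-bound, so the numbers are rough upper bounds rather than benchmarks. The function names and the model sizes are illustrative, not taken from any NVIDIA tool.

```python
# Rough sizing sketch for a single H200, using the specs quoted above.
# Assumptions: weights only (no KV cache or activations) and a
# memory-bound decode step that reads every weight once per token.
H200_MEMORY_GB = 141          # HBM3e capacity
H200_BANDWIDTH_GB_S = 4800    # 4.8 TB/s memory bandwidth

# Bytes per parameter for the precision formats listed above.
BYTES_PER_PARAM = {"fp8": 1, "fp16": 2, "bf16": 2}


def weights_gb(params_billion: float, precision: str) -> float:
    """Approximate size of the model weights in GB (1 GB = 1e9 bytes)."""
    return params_billion * BYTES_PER_PARAM[precision]


def fits_on_one_h200(params_billion: float, precision: str) -> bool:
    """True if the weights alone fit in one H200's memory."""
    return weights_gb(params_billion, precision) <= H200_MEMORY_GB


def max_decode_tokens_per_s(params_billion: float, precision: str) -> float:
    """Bandwidth-bound ceiling on batch-1 decode speed (tokens/second)."""
    return H200_BANDWIDTH_GB_S / weights_gb(params_billion, precision)


if __name__ == "__main__":
    for size in (7, 70, 180):  # hypothetical model sizes, in billions
        for precision in ("fp16", "fp8"):
            gb = weights_gb(size, precision)
            verdict = "fits" if fits_on_one_h200(size, precision) else "does not fit"
            ceiling = max_decode_tokens_per_s(size, precision)
            print(f"{size}B @ {precision}: {gb:.0f} GB, {verdict}, "
                  f"<= {ceiling:.0f} tokens/s per stream")
```

Under these assumptions, dropping from FP16 to FP8 halves the weight footprint and doubles the bandwidth-bound ceiling, which is why precision support matters as much as raw capacity. Real workloads will land below these ceilings once KV cache and compute limits are included.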

NVIDIA H200 GPU – Buy vs Rent (Cost Comparison Table)

The table below compares the monthly cost of running a model inference workload on one NVIDIA H200 GPU when you own the hardware versus when you rent it, assuming 730 hours of use per month.

| Cost component | Own & run on-prem (12-mo amort.) | Rent – Cloud GPU Provider in India (~ AWS p5e.48xlarge) |
|---|---|---|
| Capital / depreciation | ₹ 3.528 M* ÷ 12 mo = ₹ 2,94,000 / mo | – |
| Maintenance & spares (10 % / yr) | ₹ 29,400 / mo | – |
| Power – GPU draw (600 W) | 0.6 kW × ₹8 × 730 h = ₹ 3,504 | included |
| Power – cooling / PUE 1.5 | 0.3 kW × ₹8 × 730 h = ₹ 1,752 | included |
| Rack / colocation (2-4 U, 1 kW) | ₹ 15,000 | included |
| Admin / monitoring | ₹ 5,000 | included |
| AWS GPU compute | – | 730 h × $4.326 × ₹84 = ₹ 2,65,270 |
| EBS gp3 storage (1 TB) | – | $0.08 × 1,024 GB × ₹84 = ₹ 6,880 |
| Data egress (2 TB) | – | 2,048 GB × $0.02 × ₹84 = ₹ 3,440 |
| TOTAL per month | ₹ 3,48,656 | ₹ 2,75,590 |
| Effective ₹ / GPU-hour | ₹ 478 | ₹ 378 |

Key Takeaways –

- Renting is ~21% cheaper per month (₹2.75L vs ₹3.48L), making it cost-effective for short-term or variable workloads.

- Lower ₹/GPU-hour when renting (₹378 vs ₹478), ideal for high-utilization inference tasks.

- Upfront capital and maintenance costs are eliminated with cloud GPU rental.

- Power, cooling and admin overheads are bundled in rental, simplifying ops.

- Buying suits long-term, stable use if infrastructure is already in place.
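The headline figures in these takeaways can be reproduced with a few lines of arithmetic. The sketch below is only an illustration: it rebuilds the rental column from the rates shown in the table, takes the owned-hardware monthly total as stated, and derives the per-hour rates and the percentage gap. The function names are hypothetical, and the rental total differs from the table by a couple of rupees because the table rounds each line item.

```python
# Reproduces the table's rental total, per-hour rates and savings figure.
HOURS_PER_MONTH = 730    # full-month utilisation, as in the table
USD_TO_INR = 84          # exchange rate used in the table

# Owned-hardware monthly total as stated in the table (INR).
OWNED_MONTHLY_INR = 348_656


def rental_monthly_inr(
    gpu_usd_per_hour: float = 4.326,
    storage_gb: float = 1024,
    storage_usd_per_gb: float = 0.08,
    egress_gb: float = 2048,
    egress_usd_per_gb: float = 0.02,
    hours: float = HOURS_PER_MONTH,
    usd_to_inr: float = USD_TO_INR,
) -> float:
    """Monthly rental cost in INR: compute + storage + data egress."""
    compute = hours * gpu_usd_per_hour
    storage = storage_gb * storage_usd_per_gb
    egress = egress_gb * egress_usd_per_gb
    return (compute + storage + egress) * usd_to_inr


def effective_rate(monthly_inr: float, hours: float = HOURS_PER_MONTH) -> float:
    """Effective cost per GPU-hour at the given monthly usage."""
    return monthly_inr / hours


def savings_fraction(owned_inr: float, rented_inr: float) -> float:
    """How much cheaper renting is, as a fraction of the owned cost."""
    return (owned_inr - rented_inr) / owned_inr


if __name__ == "__main__":
    rented = rental_monthly_inr()
    print(f"Rent:  INR {rented:,.0f}/mo, INR {effective_rate(rented):.0f}/GPU-hour")
    print(f"Own:   INR {OWNED_MONTHLY_INR:,}/mo, "
          f"INR {effective_rate(OWNED_MONTHLY_INR):.0f}/GPU-hour")
    print(f"Renting is {savings_fraction(OWNED_MONTHLY_INR, rented):.0%} cheaper")
```

Changing `hours`, the exchange rate or the hourly GPU price shows how sensitive the comparison is to utilisation and currency movements.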

Looking for NVIDIA H100 prices? Check our detailed guide on NVIDIA H100 Price in India to compare costs and see if the H100 meets your AI workload requirements at a lower price point.

Where to Rent NVIDIA H200 in India?

Locating a reliable source to purchase or rent the NVIDIA H200 in India can be challenging, especially given the ongoing global demand and constrained supply.

Whether you plan to buy the H200 in India for long-term infrastructure or prefer the flexibility of GPU rental, choosing the right vendor is critical to ensuring performance, support and ROI.

If you’re looking for flexibility and immediate compute power, rental will be the best bet.

1. AceCloud

AceCloud provides seamless access to NVIDIA H200 GPUs through a fully cloud-based platform. Designed for Indian AI/ML startups, R&D teams and enterprise AI innovators, AceCloud provides real-time provisioning, so users can spin up H200 instances within minutes. No procurement delays. No infrastructure complexity.

With usage-based pricing, teams only pay for what they use, making it a cost-effective option for both short-term experimentation and long-term AI model training. The platform also includes enterprise-grade security, ensuring data compliance and protection across all workloads.

Key Features

Pay-as-You-Go Pricing Model

We offer a clear, pay-as-you-go pricing model that helps you control costs while running AI/ML workloads efficiently. You only pay for what you use, no hidden fees or overcommitments.

Indian Data Centers

Our India-based data centers are strategically positioned to deliver low-latency performance, regulatory compliance and high uptime. Whether you’re a startup or an enterprise, you can confidently run data-sensitive workloads while meeting local data residency requirements.

24/7 Expert Technical Support

Our expert support engineers are available 24/7 to help you with GPU configuration, workload deployment and issue resolution. We work proactively to ensure your projects stay on track with no unexpected downtime or disruptions.

Enterprise-Grade Security and Compliance

We secure your workloads with end-to-end encryption, isolated networking and a compliance-ready cloud environment. From data protection to governance, our infrastructure meets the security standards demanded by enterprise AI and ML deployments.

Rapid and Simple Deployment

Deploying GPU-powered workloads is effortless with our one-click provisioning. Your AI, ML or DevOps teams can go from concept to production in minutes. No complex setup. No delays.

Extensive GPU Portfolio

Select from a wide variety of NVIDIA GPUs including H200 (latest), H100, A100, L4, L40S, RTX 8000, RTX A6000, A30 and A2. Whether you’re handling deep learning, generative AI or HPC workloads, we offer the right GPU for your needs across all performance levels and budgets.

High-Speed NVMe Storage

Our NVMe-based block storage ensures your GPU workloads run at top speed. With high IOPS, low latency and consistent throughput, you can accelerate training on large datasets and enable real-time AI inference without bottlenecks.

2. E2E Networks

E2E Networks is another reliable Indian provider offering pay-per-use GPU instances, including support for cutting-edge accelerators like the H100 and soon the H200.

Known for its cost-effective pricing, E2E Networks is especially popular among early-stage startups and academic users who need reliable performance without navigating the complexities of hyperscale billing.

The platform is simple to use, developer-friendly and allows users to run machine learning workflows, inference models and analytics pipelines without long-term commitments.

E2E’s infrastructure also benefits from Indian data center locations, offering low-latency access and regulatory compliance.

3. Linode

While Linode is traditionally recognized for its CPU-based cloud compute services, it has gradually entered the GPU hosting space. However, H200 availability remains limited, and its GPU offerings are more suited for smaller-scale workloads or experimentation.

Pricing is competitive, but GPUs with high-bandwidth memory such as HBM3e, and support for advanced AI frameworks, may not be readily available.

4. Hyperscalers (AWS, Azure and Google Cloud)

Hyperscalers like AWS, Microsoft Azure and Google Cloud also offer NVIDIA H200 instances, such as the AWS p5e.48xlarge referenced in the table above, though availability varies by region. Regional latency, data egress costs and complex pricing tiers can add friction for Indian users.

Additionally, data locality and compliance requirements may make these platforms less suitable for sensitive or regulated AI applications.

For teams based in India, these platforms may serve well for global deployment, but local GPU providers often offer better performance-to-cost ratios.

Where to Buy NVIDIA H200 GPU in India?

For enterprises with consistent AI workloads and robust in-house infrastructure, buying the NVIDIA H200 GPU in India represents a strategic long-term investment. It eliminates recurring rental costs and gives organizations full control over performance, customization and data locality.

You can source H200 GPUs through multiple channels, most reliably via authorized NVIDIA OEM partners such as Dell, HP, Lenovo and Supermicro. Additionally, enterprise IT resellers and hardware procurement firms often offer flexible financing and integration services.

For quicker comparisons or urgent needs, platforms like Mouser Electronics or the NVIDIA Partner Network list available units from verified sellers.

Before finalizing your purchase, it’s essential to evaluate key factors such as verified stock availability, warranty coverage, shipping timelines, and post-sale support.

Given the capital-intensive nature of these GPUs, even a short delivery delay or inadequate support response can disrupt mission-critical AI initiatives. A well-informed procurement strategy will mitigate risks and accelerate ROI.

What are the Key Factors to Consider While Buying or Renting H200 GPUs?

Here are a few key factors to consider when buying or renting NVIDIA H200 GPUs:

Performance Requirements

Start by evaluating the computational needs of your workload. If you’re running model inferencing, fine-tuning LLMs or handling large-scale generative AI, the H200’s memory bandwidth and tensor performance can dramatically reduce training and inference time.

Budget and ROI

Consider your financial flexibility. Renting allows you to access high-end GPUs without upfront CAPEX. However, if your GPU usage consistently exceeds 200–250 hours/month, buying can deliver better ROI in the long run.
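The usage level at which buying overtakes renting depends heavily on the purchase price and on how many months you amortize it over. The sketch below is one way to estimate that break-even point yourself. It reuses the power, colocation, admin and maintenance figures from the cost table, while the purchase price and amortization periods in the example are hypothetical inputs, not quotes.

```python
# Break-even sketch: monthly GPU-hours at which owning costs the same
# as renting. Operating figures come from the cost table above; the
# purchase price and amortization periods below are hypothetical.
RENTAL_INR_PER_HOUR = 378        # effective rental rate from the table
POWER_KW = 0.6 + 0.3             # GPU draw plus cooling overhead
TARIFF_INR_PER_KWH = 8
COLOCATION_INR_PER_MONTH = 15_000
ADMIN_INR_PER_MONTH = 5_000
MAINTENANCE_RATE_PER_YEAR = 0.10


def fixed_monthly_cost(price_inr: float, amort_months: int) -> float:
    """Monthly cost of ownership that does not depend on usage."""
    capital = price_inr / amort_months
    maintenance = price_inr * MAINTENANCE_RATE_PER_YEAR / 12
    return capital + maintenance + COLOCATION_INR_PER_MONTH + ADMIN_INR_PER_MONTH


def break_even_hours(price_inr: float, amort_months: int) -> float:
    """Hours per month above which owning is cheaper than renting."""
    power_per_hour = POWER_KW * TARIFF_INR_PER_KWH
    saving_per_hour = RENTAL_INR_PER_HOUR - power_per_hour
    if saving_per_hour <= 0:
        raise ValueError("Renting is never more expensive per hour than power alone")
    return fixed_monthly_cost(price_inr, amort_months) / saving_per_hour


if __name__ == "__main__":
    price = 3_000_000  # hypothetical purchase price in INR
    for months in (12, 24, 36, 48):
        hours = break_even_hours(price, months)
        note = "" if hours <= 730 else " (more than the 730 hours in a month)"
        print(f"{months}-month amortization: break-even at {hours:.0f} h/month{note}")
```

A result above 730 hours means ownership cannot pay off within that amortization window at the given rental rate, so longer planning horizons and lower purchase prices are what pull the threshold down.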

Workload Scalability

For projects that scale dynamically, such as multi-tenant SaaS or seasonal AI workloads, renting provides elastic capacity. But for predictable, always-on production workloads, ownership may be more cost-effective.

Operational Overhead

Buying means handling hardware maintenance, cooling, power and physical security. Renting offloads all that to the provider, allowing teams to focus entirely on innovation.

Compliance and Data Residency

Ensure the infrastructure aligns with regulatory and data locality needs, especially when working in finance, healthcare or government sectors.

Rent or Buy the NVIDIA H200 in India?

Whether you’re scaling foundation models or experimenting with GenAI, the NVIDIA H200 GPU delivers the performance edge you need. For most teams in India, renting H200 GPUs unlocks flexibility, cost-efficiency and instant scalability without tying up capital.

If your AI workload demands consistent, high-throughput compute, buying the H200 could be a strategic long-term investment. At AceCloud, we make H200 adoption easy, whether you want on-demand access or dedicated monthly clusters.

Plus, our India-based data centers, transparent pricing and 24/7 expert support ensure your workloads stay fast, secure and compliant.

Need help choosing between renting and buying? Connect with our cloud GPU specialists today to get a custom TCO analysis tailored to your use case.

Frequently Asked Questions:

What is the NVIDIA H200 GPU?

The NVIDIA H200 GPU is the latest upgrade in the Hopper architecture family, purpose-built for demanding AI and high-performance computing. It succeeds the H100 with faster memory and better overall throughput, which make it well suited to large language models, foundation model training and generative AI inference.

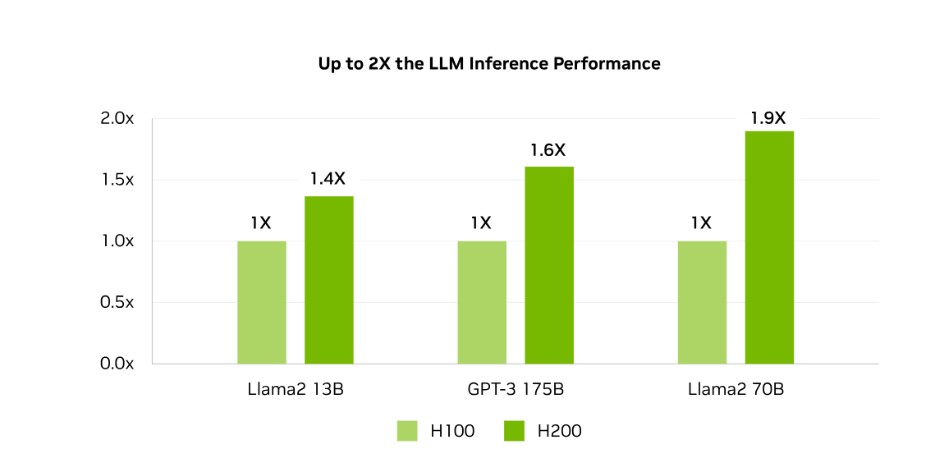

How is the H200 different from the H100?

The NVIDIA H200 pairs 141 GB of HBM3e memory with 4.8 TB/s of memory bandwidth, compared with 80 GB of HBM3 on the H100, which improves GenAI training and inference speeds.

Does the H200 work with PyTorch and TensorFlow?

Yes, the H200 GPU supports PyTorch, TensorFlow and other major AI frameworks without requiring code refactoring or custom tooling.

Are there credits or grants for Indian startups to access H200 GPUs?

Yes, some cloud providers and incubators offer credits or grants to Indian startups for H200 GPU access via research or accelerator programs.

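What networking do H200 GPUs need for distributed training?

Efficient distributed training on H200 GPUs needs high-bandwidth interconnects: NVLink between GPUs within a node and InfiniBand or comparable high-speed networking between nodes.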

Should I use the H200 now or wait for the B100?

H200 GPUs remain a top-tier choice for current GenAI use cases. Renting H200 capacity offers flexibility until the B100 becomes widely available.